In the rigging category I will talk about character setup and body deformation.

Rigging a 3D character means turning a sculpture into an animatable puppet, and many concepts are shared with stop-motion puppet animation:

A skeletal structure is created and serves two functions: motion and deformation.

By default Maya provides a fair number of tools for building skin behavior: cluster, lattice, wrap and wire deformers, blendShape and skinCluster.

Each one can be combined and layered to achieve the desired effect.

Nowadays, the most common workflow in television and feature film animation (when time and budget allow it) is to design a first pass of the body deformation with a skinCluster and to enhance it with a pose space deformation system.

The concept is to drive a set of corrective shapes as a joint reaches a given pose:

- In 3ds Max, pose space deformation is possible through the Skin Morph modifier.

- In Maya we can use Michael Comet’s open source pose space deformer.

Most of the time these two tools work as expected. The corrective shape extraction works flawlessly, but the weight interpolation and mixing tend to be a bit unreliable in the most complex cases.

The second consideration is the deformation order: the deformer sits on top of the modifier stack in Max, and after the skinCluster node in the deformation history in Maya. This is not a bad design choice, just a matter of personal taste.

For these reasons I decided to write a script to extract the corrective shapes. These shapes are then used in a regular blendShape node placed before the skinCluster.

There is a wealth of information on the skinCluster algorithm: it is similar to linear blend skinning, also called linear vector blending:

A vertex is transformed by N joints, and each influence has a weight value that controls how rigidly the vertex is attached to that bone. To get predictable results, the sum of all the weights of a vertex should be no more than 1.0.

From a more practical standpoint, the algorithm rigidly attaches the vertex to each influencing bone, and the final position is the weighted sum of all the resulting vectors.

    transformStorage is a vectorArray
    transformStorage.clear()
    for each influencing bone:
        transformedPosition = world space vertex position * currentJointBindPose * currentJointTransformation
        transformStorage.append( ( transformedPosition - world space vertex position ) * currentJointweight )
    finalvector = transformStorage[0]
    for j in range(1, transformStorage.length()):
        finalvector = finalvector + transformStorage[j]
    finalVertexPosition = world space vertex position + finalvector

The pseudo code above assumes, as good practice and as a prerequisite, that the transformations of the mesh and of its whole parent hierarchy are frozen, so that a vertex position in object space is the same as in world space.
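The pseudo code above can be sketched in plain Python, outside of Maya. This is only an illustration under a few assumptions: matrices are 4x4 nested lists using the same row-vector convention as Maya (point * matrix, translation in the fourth row), and the helper names (`mat_mul`, `transform_point`, `translation`, `skin_vertex`) are made up for this example.

```python
def mat_mul(a, b):
    """Multiply two 4x4 matrices stored as row-major nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def transform_point(p, m):
    """Transform the point p = (x, y, z) by m, row-vector convention (p * m)."""
    row = (p[0], p[1], p[2], 1.0)
    return tuple(sum(row[k] * m[k][j] for k in range(4)) for j in range(3))


def translation(tx, ty, tz):
    """Build a translation matrix with the offset stored in the fourth row."""
    return [[1.0, 0.0, 0.0, 0.0],
            [0.0, 1.0, 0.0, 0.0],
            [0.0, 0.0, 1.0, 0.0],
            [float(tx), float(ty), float(tz), 1.0]]


def skin_vertex(position, influences):
    """Linear blend skinning of one vertex.

    influences is a list of (bind_pre_matrix, world_matrix, weight) tuples,
    one per joint acting on the vertex.
    """
    offset = [0.0, 0.0, 0.0]
    for bind_pre, world, weight in influences:
        # Rigidly attach the vertex to this joint, then keep only the
        # displacement it produces, scaled by the joint weight.
        moved = transform_point(position, mat_mul(bind_pre, world))
        for axis in range(3):
            offset[axis] += weight * (moved[axis] - position[axis])
    return tuple(position[axis] + offset[axis] for axis in range(3))


# Two joints bound at the origin and at x = 2; the second one moves up by 4.
vertex = (1.0, 0.0, 0.0)
influences = [
    (translation(0, 0, 0), translation(0, 0, 0), 0.5),
    (translation(-2, 0, 0), translation(2, 4, 0), 0.5),
]
skinned = skin_vertex(vertex, influences)
print(skinned)  # the vertex follows the moving joint halfway: y becomes 2
```

With both weights at 0.5 the vertex travels half of the distance covered by the second joint, which is the weighted vector addition described above.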

currentJointBindPose: this matrix transforms the current vertex from world space into the joint's object space, attaching the point to the joint.

This value can be retrieved from the skinCluster node's multi-attribute bindPreMatrix: at bind time Maya stores, for each influence, the world inverse matrix of the related transform node. One interesting point is that this array is connectable: we can adjust the bind position of a joint without losing the skinning configuration by plugging an inverse matrix into the right slot.

currentJointTransformation: we operate in world space, so the world space matrix of the current joint is plugged into the matrix array attribute of the skinCluster.

A point multiplied by a matrix is transformed into the space of that matrix. To retrieve a vector we subtract the original vertex point from the transformed vertex point.

currentJointweight: can be found in the compound array attribute weightList.

Maya's implementation of the influence weights is quite interesting: it is an array of sparse arrays in which we can find the weight of every joint that influences a given vertex. The array indices determine which matrix attribute is used for the calculation.
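To picture this array of sparse arrays, here is a small stand-alone Python sketch. It does not touch Maya at all: `weight_list`, `influence_matrices` and `influences_for` are hypothetical names, and the strings only stand in for real 4x4 matrices.

```python
# Hypothetical sparse weight table: one dict per vertex, keyed by the index
# of the influence in the skinCluster matrix array. Influences with no
# weight on a vertex are simply absent.
weight_list = [
    {0: 1.0},             # vertex 0: fully attached to influence 0
    {0: 0.25, 3: 0.75},   # vertex 1: shared between influences 0 and 3
]

# Stand-in for the matrix array; real entries would be 4x4 matrices.
influence_matrices = {0: "matrix[0]", 3: "matrix[3]"}


def influences_for(vertex_id):
    """Return (matrix, weight) pairs for the influences acting on a vertex."""
    return [(influence_matrices[index], weight)
            for index, weight in sorted(weight_list[vertex_id].items())]


pairs = influences_for(1)
total = sum(weight for _, weight in pairs)
print(pairs, total)
```

The point of the sparse layout is that a vertex only stores the few joints that actually move it, while the index still tells us which matrix to fetch.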

In MEL or Python we can retrieve these values with the following command:

    import maya.cmds as cmds

    skinClNode = 'skinCluster'
    vertId = 15  # index of the current vertex
    vertexBonesID = cmds.getAttr(skinClNode + '.weightList[%s].weights' % vertId, mi=True)

The -mi (multiIndices) flag of the getAttr command returns the list of indices that are in use in an array attribute. In the Maya API we can call maya.OpenMayaAnim.MFnSkinCluster.getWeights( path, components, influenceIndices, weights ) to retrieve in one step the weights of a list of components for the given influence indices.

This compound array is connectable, so we can plug a whole array into it to store or export skinning information without extracting any single value.

Another creative use of this property is that we can animate the weight value of each influence for a vertex component or, better, create and assign skinning information to a polygonal mesh whose topology varies.

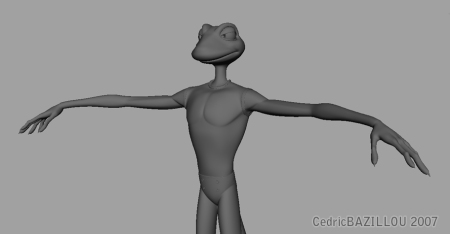

After studying the skinCluster algorithm, the next step was to define a set of rules for extracting our corrective shapes. To illustrate the process I will use a character from an old TV commercial we did at Hanuman Studio for a bowling playhouse. His name is Zazar, and together with his counterpart Leon he will be one of the main characters of an animated TV series.

- As a design choice, my first rule was to restrict the usage of my script to polygonal objects that are deformed by a skinCluster.

- The second restriction was to limit the type of influences in the skinCluster to regular transform nodes such as joints, locators or groups.

- All deforming mesh transformations are frozen, their pivots placed at the origin, and the meshes parented under a group that does not inherit transforms.

- The last rule was to place the blendShape node just before the skinCluster.

Like any pose space deformer system, the user deals with a two-step process:

- rotate and/or translate one or several joints present in the skinCluster to pose a part of the body.

- sculpt an independent copy of this mesh to define the correct look of the skin.

Once the target sculpture satisfies the user's needs we can start the calculation loop. For optimization purposes each vertex in the skinned mesh (1) is checked against the vertex with the same ID in the target mesh (2), and if the distance between them is greater than a minimal value we reverse the target mesh tweak back into the skinned mesh object space (3).
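The distance check can be sketched as follows. This is a stand-alone illustration rather than the actual script: `moved_vertex_ids` and the `tolerance` value are assumptions, and the point lists would in practice come from the skinned and sculpted meshes.

```python
import math


def moved_vertex_ids(skinned_points, target_points, tolerance=1e-4):
    """Return the IDs of the vertices that the sculptor actually moved.

    Both lists hold (x, y, z) tuples and share the same vertex order.
    """
    ids = []
    for vertex_id, (skinned, target) in enumerate(zip(skinned_points,
                                                      target_points)):
        # Only vertices displaced by more than the tolerance need the
        # (more expensive) matrix inversion step.
        if math.dist(skinned, target) > tolerance:
            ids.append(vertex_id)
    return ids


skinned_points = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
target_points = [(0.0, 0.0, 0.0), (1.0, 0.5, 0.0), (2.0, 0.0, 0.00001)]
to_process = moved_vertex_ids(skinned_points, target_points)
print(to_process)
```

Here only the second vertex is kept: the first one did not move and the third moved by less than the tolerance.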

    # In order to reverse the sculpted independent shape back to the original skinned mesh object space we must concatenate
    # the matrices of the influencing joints and transform the current vertex position of the sculpted mesh by the inverse of the result:
    matrixStorage is a MatrixArray
    matrixStorage.clear()
    for each influencing bone:
        matrixStorage.append( currentJointBindPose * currentJointTransformation * currentJointweight )
    finalMatrix = matrixStorage[0]
    for j in range(1, matrixStorage.length()):
        finalMatrix = finalMatrix + matrixStorage[j]
    reverseMatrixforcurrentVertex = finalMatrix.inverse()
    finalVertexPosition = world space vertex position of the sculpted mesh * reverseMatrixforcurrentVertex
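Below is a plain Python sketch of that inversion, runnable outside of Maya. It rests on the same assumptions as the pseudo code (row-vector convention, frozen mesh), and all helper names (`mat_inverse`, `blended_matrix`, `unskin_point`, ...) are invented for the example; inside Maya the matrix classes of the API would do this work.

```python
def mat_mul(a, b):
    """Multiply two 4x4 matrices stored as row-major nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def mat_inverse(m):
    """Invert a 4x4 matrix by Gauss-Jordan elimination with partial pivoting."""
    aug = [list(map(float, m[i])) + [float(i == j) for j in range(4)]
           for i in range(4)]
    for col in range(4):
        pivot = max(range(col, 4), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("matrix is singular")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        scale = aug[col][col]
        aug[col] = [v / scale for v in aug[col]]
        for r in range(4):
            if r != col:
                factor = aug[r][col]
                aug[r] = [v - factor * p for v, p in zip(aug[r], aug[col])]
    return [row[4:] for row in aug]


def transform_point(p, m):
    """Transform the point p = (x, y, z) by m, row-vector convention (p * m)."""
    row = (p[0], p[1], p[2], 1.0)
    return tuple(sum(row[k] * m[k][j] for k in range(4)) for j in range(3))


def translation(tx, ty, tz):
    """Build a translation matrix with the offset stored in the fourth row."""
    return [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0],
            [0.0, 0.0, 1.0, 0.0], [float(tx), float(ty), float(tz), 1.0]]


def blended_matrix(influences):
    """Sum bind_pre * world * weight over all the influences of a vertex."""
    total = [[0.0] * 4 for _ in range(4)]
    for bind_pre, world, weight in influences:
        joint = mat_mul(bind_pre, world)
        for i in range(4):
            for j in range(4):
                total[i][j] += joint[i][j] * weight
    return total


def unskin_point(sculpted, influences):
    """Bring a sculpted, posed vertex back to the space before the skinCluster."""
    return transform_point(sculpted, mat_inverse(blended_matrix(influences)))


# Same two joints as a simple test: the second one moved up by 4 units.
influences = [
    (translation(0, 0, 0), translation(0, 0, 0), 0.5),
    (translation(-2, 0, 0), translation(2, 4, 0), 0.5),
]
sculpted = (1.0, 2.5, 0.0)   # the skinned vertex sits at (1, 2, 0)
pre_skin = unskin_point(sculpted, influences)
print(pre_skin)
```

The sculptor pushed the posed vertex up by 0.5, and the inverted position sits 0.5 above the bind position (1, 0, 0), so skinning it again gives back the sculpted point.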

One interesting thing I learned once the first working draft of this script was completed is that extracting and mixing several corrective shapes requires one extra step: negating the contribution of the blendShape node from the extracted corrective morph.
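One way to read this extra step, sketched in plain Python: the inverted sculpt already contains whatever the blendShape node was adding in the current pose, so those offsets are subtracted before the new target is stored. This is my interpretation written as a hypothetical helper (`corrective_delta`), not the code of the actual script.

```python
def corrective_delta(unskinned, base, active_targets):
    """Return the offset to store in a new blendShape target for one vertex.

    unskinned      -- sculpted position brought back before the skinCluster
    base           -- undeformed position of the same vertex
    active_targets -- list of (weight, delta) pairs that the blendShape node
                      already applies in the current pose
    """
    already_applied = [0.0, 0.0, 0.0]
    for weight, delta in active_targets:
        for axis in range(3):
            already_applied[axis] += weight * delta[axis]
    # Whatever remains once the base and the active targets are removed
    # is the contribution of the new corrective shape alone.
    return tuple(unskinned[axis] - base[axis] - already_applied[axis]
                 for axis in range(3))


base = (1.0, 0.0, 0.0)
unskinned = (1.0, 0.5, 0.0)
active = [(1.0, (0.0, 0.2, 0.0))]   # an earlier corrective, fully on
delta = corrective_delta(unskinned, base, active)
print(delta)
```

Without the subtraction the earlier corrective would be counted twice as soon as both targets are active at the same time.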

Below we can see a UI to create and manage corrective blendshapes

Really trying to understand the maths of taking the target back into the bind space – making my head hurt. How do you deal with weights?

I have just tried to do a really basic thing: in my setup corrective shapes are supposed to be additive, and I wrote a node to trigger each pose weight sequentially (a kind of spherical coordinate system, but wrapped around my preferences). @math: is the current pseudo code with matrix concatenation readable?

Sort of, I kinda get you have to transform the target into the space of the bind – I just don’t know how you include the weights. I need to think on this overnight. I’m thinking much broader in terms of n-space dimensions. Just stuck on getting a target pose into the bind space.

Ok, my brain cracked like a coconut on the walk home and I understand it now, hopefully! Basically a transform inverse of the weighted joint transforms multiplied into the bind pose.

Thanks for your help, I’ll try and post some results.

Sweet if it was useful. I managed to understand it as some kind of vector addition the first time I was working on it.

How are you creating this value: ( currentJointBindPose* currentJointTransformation * currentJointweight ) ?

Do you break the transforms into rotation and translation, sum their weights accordingly, then add them back as matrices? E.g.:

    A + B
    r0 = slerp a.rotation (quat 0 0 0 1) jointWeight
    t0 = a.worldtranslation * jointWeight
    (r0 as matrix3) * transMatrix t0

Think i got it 🙂

Not really, this is the most shameful type of interpolation: just weighted vector addition. Let's say that your vertex is rigidly bound to each joint; the final transformation of the vertex is defined by multiplying each vector by its weight and adding the vectors together. Hence the name linear vector blending, which is much more evocative than linear blend skinning or smooth skinning…

Think I'm getting it now. The inverse was tripping me up.

Perfect. It's just basic vector and matrix math, but once you play with it there is no turning back (as you already know). Last time I was digging into Comet's pose deformer / reader source code, but I had a hard time with the radial basis function used to blend poses together. Tweaking curves or interpolation ranges is kind of tiring. Do you also deal with lots of corrective shapes?

Well, the math is super hack-tastic currently, but it works! As for blending, I'm really thinking about n-dimensional space (eigenSpace). With the upper arm you really have an n-space of 6D (+x/-x … -z); interpolation can be any type (cubic, ease-in etc.) and it's naturally non-linear. Crucially, poses can exist at any point in this space.

Poses can also be absolute when you include zero position dimensions. For the shoulder you can essentially break the space into two chunks, direction and spin; if you treat these as your dimensions* you're really only doing first and second order poses, i.e. pose == direction + spin.

N-space math is awesome – simple yet brain crunchingly complex!

Yet it's just too complex for me for the time being, so I tried a brute-force, simple, stupid linear interpolation: divide space into thinner sectors until an acceptable error margin is reached…

Even now my system is based on one postulate, removing spin/twist from the equation: instead of a default twist for an articulation I provide a database of states that convey the most logical default pose for a limb.

Without twist shapes, with sectors spanning 30 degrees front/rear and up/down for the arm and shoulder, I am already dealing with 164 shapes, and if I add 8 shapes per pose to cope with twisting, it will fatten this number exponentially.

What I want to achieve is a robust way to handle corrective shape creation and maintenance.

At a given pose, a corrective shape is the result of several repetitive factors that can be reused and described individually: muscles repelling each other, sliding over hard surfaces, etc…

In Maya or Max several tools can do the job (like muscle smart collide, point relaxation etc.) but I find they are too generic. I strive for something like a knuckle deformer or a hip module: a suite of tools which can be used from classic toons to anatomically coherent creatures.

PSD

For the basic data flow, I think I'll store the vertex positions, their associated bone ids, world transforms and weights offline, or in an attribute. I'll get the inverse bind pose of the current pose and of the sculpted pose, and do basic corrective math now that it's back in world space:

(vertexWorldPos + sculptedPoseBind) – (vertexWorldPos + currentPoseBind)

This way it's analogous to any corrective system, only that I'm putting it back into world space. I don't have to worry about what poses went into the current ‘state’ of the mesh.

N-Space

As to n-dimensionality, the crucial thing to remember is that a pose is a product of the dimensions that go into it. And this product is a result of the position placed on the weight. I have more on this on my blog.

As to automatically building a corrective or 2nd, 3rd, 4th order etc pose – it would be tricky. You could definitely build a ‘generic’ crease, mass shift builder sort of how Face Robot defines creases using vertex color.

You're not dealing so much with a rubber tube that always creases the same way: human tissue, muscles etc. act in complex ways based on the hierarchy they are moved in.

Interesting post as usual. For the implementation I chose to reverse the world space sculpted pose target, as my goal was to deal with only one regular blendShape deformer working before a skinCluster.

@storing useful data: yeah, I tried that also: first I used matrix attributes, message connections and even a dagPose node.

What I had in mind was not procedural/automatic corrective shapes. To build these shapes, even with different designs, we essentially face the same types of phenomena (then it is just a matter of how intricate we want things to be), and the workflow is always the same: put the character into pose, then try to sculpt a good shape by pushing points, using other deformers and maybe relaxing some points.

My goal is to store this step in an agnostic manner.

Let's say that when you bend your arm over 120 degrees, you can define a line deformer to control the inner flesh crease, reuse a kind of lattice to push the flesh to the side, isolate a set of vertices/faces in the view to better understand the form you are working on, or relax a region not by painting weights but by defining a gradient from 4 corner attributes.

First of all, thank you for your fantastic blog. 🙂

You can’t imagine how much your work is inspirational for me.

I’m creating a corrective shape extractor for max and I’m stuck, it’s driving me crazy!

I think I’m pretty comfortable with vector math but I wasn’t able to negate the skinning deformation.

I assume that you do this when you multiply a matrix (I hope I got it!):

    (
        fn multiplyMatrix Mat val =
        (
            resPos = Mat.position * val
            resRotation = slerp (quat 0 0 0 1) Mat.rotation val
            resMat = resRotation as matrix3
            resMat[4] = resPos
            return resMat
        )
    )

With a practical example, here’s what I understood:

Say I have a vertex assigned to UpperArm and ForeArm with weights 50/50:

    // the skinning process
    vertexBindPosition = [x,y,z]
    In bind pose
    (
        UpperArmBindPose = UpperArm.transform*inverse(UpperArm.parent.transform)
        ForeArmBindPose = ForeArm.transform*inverse(ForeArm.parent.transform)
    )
    In Pose
    (
        UpperArmTransformPose = UpperArm.transform*inverse(UpperArm.parent.transform)
        ForeArmTransformPose = ForeArm.transform*inverse(ForeArm.parent.transform)
    )
    //matrices from bind pose to pose to correct
    UpperArmDiff = UpperArmTransformPose*inverse(UpperArmBindPose)
    ForeArmDiff = ForeArmTransformPose*inverse(ForeArmBindPose)
    skinnedVertexPosition = ( (vertexBindPosition*inverse(UpperArm.transform)) * (multiplyMatrix UpperArmDiff 0.5) * UpperArm.parent.transform) +
        ( (vertexBindPosition*inverse(ForeArm.transform)) * (multiplyMatrix ForeArmDiff 0.5) * ForeArm.parent.transform)
    // now reverse things
    correctedVertex = [x,y,z]
    correctedVertexInBindPose = (correctedVertex*inverse(UpperArmTransformPose)) * (multiplyMatrix (inverse(UpperArmDiff)) 0.5) * UpperArmTransformPose +
        (correctedVertex*inverse(ForeArmTransformPose)) * (multiplyMatrix (inverse(ForeArmDiff)) 0.5) * ForeArmTransformPose

Where am I wrong?!

A useful read! Adapted a similar method for my own pipeline scripts with the help of yourself and a few other sources. One question, did you ever look into making correctives for meshes with DQ weighting added on top of the linear weights?
